IntroductionCopied!

As we mentioned in our previous article, the main weakness of LLMs is their lack of knowledge on topics they have not been trained on, in such cases we rely on RAG. Thus, our goal is to build an application that answers the user’s questions about subjects that the LLM isn’t familiar with. This is accomplished by feeding the LLM with the relevant knowledge, i.e. text from the documents.

Initially, we’re not targeting a specific domain problem, such as processing medical, literary, or historical documents. Instead, we are building a solid foundation for document processing in general. Later, it can be used as a part of a more sophisticated application and easily upgraded.

Building the RAG applicationCopied!

Within our application, we've chosen to treat textual resources as an entity that we call a document. A document can be derived from any knowledge source, including PDF, HTML, and other text formats if they contain textual records. We will skip the transformation process and proceed under the assumption that this has already been done for us. To differentiate between documents, we require a documentId.

Image 1 - Document model

Firstly, we need some documents that contain the domain knowledge discussed earlier. We’re going to use a literary work by William Shakespeare as a reference. Starting with “Romeo and Juliet”, one of the questions could be “Is the Romeo and Juliet scene set in Verona?”. The objective is to ensure that our application can retrieve text relevant to a question from a document.

At this stage, we're not looking for a precise answer. A satisfactory piece of text may look like this:

“Two households, both alike in dignity, In fair Verona, where we lay our scene...”

The retrieved text might not be the only one that provides an answer to our question. For example, another relevant snippet of text might be:

“By thee, old Capulet, and Montague, Have thrice disturb'd the quiet of our streets, And made Verona's ancient citizens Cast by their grave beseeming ornaments...”

Let's define the requirements for input-output parameters and illustrate them to evaluate our current position. The question mark in the diagram below symbolizes the missing implementation that will be discussed later.

Image 2 - I/O solution diagram

The relevant snippets of text are retrieved and sent to the LLM in some form. The LLM will provide a user with the final answer.

The only thing we know about these snippets is their content, which can represent any subject and extend to any length. This definition is too vague to work with, so we need an additional constraint. This constraint will restrict the maximum length of the retrieved text. With the maximum length in mind, we are introducing the next building block – a chunk.

ChunkingCopied!

Obtaining chunks entails splitting the text of a document. This process is commonly known as chunking. In the basic case, we’re going to split the text by its maximum length. The following image illustrates the chunking of a text that's 250 characters long. In this example, each chunk has a maximum length of 64 characters.

Image 3 - Document chunking process

We’ve established that there can be multiple chunks that contain an answer to a single question. This approach opens new possibilities, as multiple chunks can be compared and ranked. Ranking requires a metric by which the chunks will be compared. This metric is called relevance, and it will play an important role in index retrieval.

We need to find a way to compare the chunks. From a historical perspective, we initially utilized primitive methods for comparing chunks based on syntax. However, there have been significant advancements, and now we can compare chunks of text by leveraging semantics. With that in mind, we’re adopting vector space representation for these chunks which enables effective comparison. This step requires transforming the chunks into vectors, which leads to the following transformation-related questions:

- How many features (dimensions of a vector) do we need?

- What does each feature of the vector represent?

- What is the mapping function that transforms text into a vector while preserving its semantic meaning?

EmbeddingCopied!

Luckily, we don't have to deal with these problems, as we can choose one of the existing machine learning models for the embedding process. This process is commonly known as embedding.

The terms vector and embedding are used interchangeably in the context of index retrieval. The importance of embedding models lies in their capability to save the semantics of the chunk within the vector.

Embedding models have a predefined maximum text input size and a fixed vector output size. Simply put, the input size can vary until it reaches the maximum, with any overflow being ignored. On the other hand, the output size remains fixed.

Image 4 - Embedding process

The current state of our application implies that chunks are saved in-memory, because we haven’t introduced permanent storage yet. This approach does not scale to large documents and production environments so chunks should be stored in a database instead. To incorporate what we’ve achieved so far, the database must be able to work with vectors. That being said, let’s briefly delve into the vector database.

Vector databaseCopied!

A vector database consists of collections. Each collection has a unique name and a specific vector dimension. Additionally, these collections contain entries as shown in the image below.

Image 5 - Vector database representation

Let’s discuss what we need to store in a vector database, i.e. determine the structure of an entry. The goal is to avoid repeating the process that will always result in the same outcome.

A prime example that seamlessly aligns with this story is chunking. Using the same chunking strategy on the same document will always yield the same result. The same principle applies to the embedding process. With all of this in mind, we end up with the following structure:

Image 6 - Database entry model

Once the embedding process is integrated and each chunk is saved with its corresponding vector representation, we can finally perform a vector search that will return the relevance for each chunk.

Hold on for a moment, we can’t perform a vector search because we haven't yet defined a function that outputs the relevance. The good news is that we don't have to implement it on our own since it’s already built into vector databases.

When it comes to vector search, several strategies emerge. Cosine similarity is one of the most widely adopted. The cosine similarity is determined by the ratio of the dot product of two vectors to their norms.

Image 7 - Cosine similarity (vector search)

Vector search operation completes the process of basic index retrieval. While there are some downsides, there is a lot of space for improvement. We can derive more complex strategies from this basic index retrieval, but we will save that discussion for later (future blog post about advanced strategies).

To keep it simple, we are just going to make small improvements to get familiar with additional concepts that will play an important role in more advanced strategies. The following image shows what we have done so far.

Image 8 - Updated solution diagram

ImprovementsCopied!

MetadataCopied!

To improve existing index retrieval, we are going to point out some of the challenges. For example, while searching through multiple documents a user wants to know the origin of each chunk.

Previously, we considered a single document - “Romeo and Juliet”. Now, we are going to extend it to include another William Shakespeare tragedy - “Hamlet”. Let’s consider a scenario where we pose the question: “What do the characters think about fortune?”, and get the following chunks:

“To be, or not to be: that is the question: Whether 'tis nobler in the mind to suffer The slings and arrows of outrageous fortune...” - Hamlet

“O Fortune, Fortune! All men call thee fickle: If thou art fickle, what dost thou with him That is renowned for faith? Be fickle, Fortune...” - Romeo and Juliet

So, the issue is that a user wants to know the source document of each chunk, but the only information available is the relevance and the chunk itself. We can address this by adding another building block on top of each chunk - metadata. Metadata is simply a JSON object that describes each chunk. It’s stored in the vector database along with the chunk.

How does metadata help us? During the chunking process of each document, we can fetch all the information about the specific document and persist it to our database so we can easily retrieve it later when doing vector search. After making this change, it's easy to serve all the metadata along with each chunk.

Chunk orderingCopied!

Metadata is useful for solving many problems. Once we've mapped a chunk to its source document, another common issue is the ordering of chunks within a document. Let's go through another practical example to fully grasp the problem and utilize the index retrieval once again.

All that retrieving made us hungry, so let’s cook something. We are going to make "Beef Stew", and here’s the recipe I’ve rewritten from my grandma:

“Dust the beef chunks with flour, ensuring each piece is evenly coated. Heat a heavy-bottomed pot over medium-high heat. Brown the beef in batches to ensure a crispy exterior. Deglaze the pot with red wine, scraping up the flavorful bits. Return the beef to the pot, now simmering in the wine. Add chopped carrots and potatoes to the mix. Pour in beef broth until the ingredients are just covered. Let the stew simmer for two hours, allowing the flavors to meld.”

We’re in a hurry, so we’re using basic index retrieval to prepare the dish faster! We’re starting with chunking the recipe. The maximum length for a chunk is 64 characters, so the first two chunks look like this:

“Dust the beef chunks with flour, ensuring each piece is evenly” “coated. Heat a heavy-bottomed pot over medium-high heat. Brown t”

Fixed-size chunking isn’t effective in this case as it can split in the middle of a word, causing a loss of information. Additionally, because the recipe instructions are short, we would prefer to have each sentence in a separate chunk. Therefore, we’re going to split the instructions by a dot, using the following regular expression: ". ".

With an updated chunking strategy, let’s vectorize the recipe and start retrieving results for the main ingredients. Searching for "beef", yields following results:

Image 9 - Retrieved chunks for search term "beef"

Then, as we started browning the beef, the grandmother arrived to prevent the forthcoming disaster. A heated discussion unfolded as she questioned our actions, and we explained we were following her recipe. Not convinced, she retrieved the original recipe from the shelf to show us the correct way:

- Dust the beef chunks with flour, ensuring each piece is evenly coated.

- Heat a heavy-bottomed pot over medium-high heat.

- Brown the beef in batches to ensure a crispy exterior.

- Deglaze the pot with red wine, scraping up the flavorful bits.

- Return the beef to the pot, now simmering in the wine.

- Add chopped carrots and potatoes to the mix.

- Pour in beef broth until the ingredients are just covered.

- Let the stew simmer for two hours, allowing the flavors to meld.

It seems like we have forgotten to rewrite the ordinal numbers. That’s why we couldn’t reconstruct the proper sequence of steps in the recipe! Having left my grandmother to cook, let's focus on rewriting the recipe. Halfway through rewriting the recipe, we’ve noticed that there are some recipes without any ordinal numbers! How do we cover this case as well?

A new challenge arose – keeping the original sequence of chunks in a document.

Previously, it was stated that metadata can be helpful, let’s try to use it again. The metadata already includes a documentId, by which the multiple recipes are differentiated. A new property to introduce as metadata is an index field. Each chunk should have a corresponding index within a document. The index will be determined during chunking. After retrieving the chunks, we can sort them by an index to speed up navigating the results. After applying the proposed changes, the chunking process now looks like this:

Image 10 - Document chunks with metadata

Results after applying the changes will look like this:

Image 11 - Updated retrieved chunks

The outcome is the correct order of steps. In addition, we can see what the missing indexes are between, for example, two and four, so we’re aware of the missing steps.

LLM integrationCopied!

So far, we've managed to retrieve relevant chunks that might contain the answer, but we haven't found the precise answer yet. Incorporating a feature that offers human-like responses would significantly boost the user experience. This can be accomplished by integrating our application with a large language model (LLM).

Introducing a LLM to our application means our application needs to send prompts to it. A prompt is a set of instructions for a LLM that will contain the user’s question and relevant context built from the chunks.

A new challenge arises: what is the exact procedure for combining chunks into context? Since we’re working with basic index retrieval, we will utilize the simplest strategy. This strategy is the concatenation of the chunks with a new line.

Image 12 - Final solution diagram

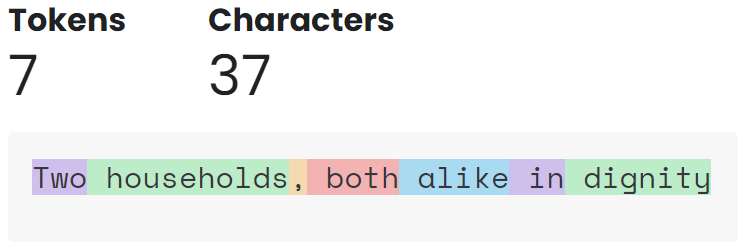

After incorporating everything we have described so far, we provide a prompt to the LLM. Communicating with LLMs introduces us to the concept of tokens, which determine the price of communication. Tokens are obtained through the process of tokenization, which is a method of parsing a text. A token is the smallest unit of data that carries information within a context.

According to OpenAI, the following sentence: “Two households, both alike in dignity” – W. Shakespeare, comprises of 7 tokens, as illustrated in the image below. In interactions with the LLM, we distinguish between two types of tokens, the input tokens and output tokens. Input tokens refer to the user's question, while the output tokens refer to the answer generated by the LLM. The latter incurs a higher cost.

Image 13 - Tokenization

Image 13 - Tokenization

ConclusionCopied!

In this blog post, we familiarized ourselves with the fundamental concepts of RAG. We identified several common practical challenges and devised solutions to mitigate them, aiming to flatten the learning curve.

Our approach was segmenting documents into manageable chunks, transforming text into vector representations, and leveraging a vector database for efficient retrieval. This establishes a strong foundation for AI systems to tap into extensive domain-specific knowledge pools.

The journey from raw text to semantically rich vectors illustrates the great potential of combining traditional document processing techniques with cutting-edge AI.

An emphasis was placed on vector search, which is crucial for enhancing the precision of information retrieval. Another important concept was metadata which is used for enriching the data with details necessary for more accurate vector search outcomes. This aspect is fundamental in navigating the complexities of domain-specific information, ensuring AI systems can retrieve the most relevant content. In addition, we mentioned how metadata can elevate the customization capabilities to a new level, which is a topic that will be explored in future discussions.

After learning about essential concepts, among which context stands out in particular, you can deepen your knowledge about it in the upcoming article – Context Enrichment.

SummaryCopied!

We navigated through the essentials of Retrieval-Augmented Generation (RAG), addressing common challenges to demystify the technology and ease the learning curve.

The process is divided into two parts: data ingestion and data retrieval.

- Data ingestion involves chunking documents, generating metadata, and embedding the chunks. All this information is compiled into entries. The process concludes with the entries being stored in a database.

- Data retrieval involves embedding the user’s question and querying the vector database. This yields a list of the relevant chunks that are consolidated into the context. The context is then injected into the prompt along with the user’s question, the prompt is sent to the LLM, the LLM does it's reasoning with the provided context, and finally, the LLM returns a human-like answer.